Self-hosting LangSmith with Docker

Self-hosting LangSmith is an add-on to the Enterprise Plan designed for our largest, most security-conscious customers. See our pricing page for more detail, and contact us at sales@langchain.dev if you want to get a license key to trial LangSmith in your environment.

This guide provides instructions for installing and setting up your environment to run LangSmith locally using Docker. You can do this either by using the LangSmith SDK or by using Docker Compose directly.

Prerequisites

- Ensure Docker is installed and running on your system. You can verify this by running:

docker info

If you don't see any server information in the output, make sure Docker is installed correctly and launch the Docker daemon.

- LangSmith License Key

- You can get this from your LangChain representative. Contact us at sales@langchain.dev for more information.

- LangSmith Version

- Our Docker Compose files are pinned to the latest self-hosted release of LangSmith. If you want to use a different version, you can specify it in the .env file or in the docker-compose.yml file.

- OpenAI API Key (optional).

- Used for the natural language search feature. You can also specify an OpenAI key in the browser for the playground feature.

- OAuth Configuration (optional).

- You can configure OAuth using the .env file. You will need to provide a client_id and client_issuer_url for your OAuth provider.

- Note: we rely on the OIDC Authorization Code with PKCE flow. We currently support almost any OIDC-compliant provider; however, Google does not support this flow.

- External Postgres (optional).

- You can configure external Postgres using the .env file. You will need to set the POSTGRES_DATABASE_URI environment variable to the connection string for your Postgres instance.

- If using a schema other than public, ensure that you do not have any other schemas with the pgcrypto extension enabled, or you must include that schema in your search path.

- Note: We only officially support Postgres versions >= 14.

- External Redis (optional).

- You can configure external Redis using the .env file. You will need to set the REDIS_DATABASE_URI environment variable to the connection string for your Redis instance.

- Currently, we do not support using Redis with TLS. We will be supporting this shortly.

- We only officially support Redis versions >= 6.
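The Docker prerequisite above can be checked from a script before anything else runs. The following is a minimal sketch, not part of LangSmith: the function name docker_ready is hypothetical, and it assumes a Docker release in which docker info exits with a non-zero status when the daemon cannot be reached.

```shell
#!/bin/sh
# Preflight sketch: report whether the Docker CLI exists and the daemon answers.
# docker_ready is a hypothetical helper name, not part of LangSmith or Docker.
docker_ready() {
    if ! command -v docker >/dev/null 2>&1; then
        echo "docker CLI not found on PATH" >&2
        return 1
    fi
    # Assumption: `docker info` exits non-zero when it cannot reach the daemon.
    if ! docker info >/dev/null 2>&1; then
        echo "Docker daemon is not reachable; start Docker and retry" >&2
        return 2
    fi
    echo "Docker is installed and running"
}
```

Calling docker_ready at the top of a setup script lets it stop early with a clear message instead of failing later during image pulls.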

Running via Docker Compose

The following explains how to run LangSmith using Docker Compose. This is the most flexible way to run LangSmith without Kubernetes. In production, we highly recommend using Kubernetes.

1. Fetch the LangSmith docker-compose.yml file

You can find the docker-compose.yml file and related files in the LangSmith SDK repository here: LangSmith Docker Compose File

Copy the docker-compose.yml file and all files in that directory from the LangSmith SDK to your project directory.

- Ensure that you copy the users.xml file as well.

2. Configure environment variables

Copy the .env.example file from the LangSmith SDK to your project directory and rename it to .env. Then, set the following environment variables in the .env file:

# Don't change this file. Instead, copy it to .env and change the values there. The default values will work out of the box as long as you provide your license key.

_LANGSMITH_IMAGE_VERSION=0.2.11 # Change to your desired LangSmith version. Required. Typically you should use the latest version defined in the .env file.

LANGSMITH_LICENSE_KEY=your-license-key # Change to your LangSmith license key. Required

OPENAI_API_KEY=your-openai-api-key # Needed for Online Evals and Magic Query features

AUTH_TYPE=none # Set to "oauth" if you want to use OAuth2.0

OAUTH_CLIENT_ID=your-client-id # Required if AUTH_TYPE=oauth

OAUTH_ISSUER_URL=https://your-issuer-url # Required if AUTH_TYPE=oauth

API_KEY_SALT=super # Change to your desired API key salt. Can be any random value. Must be set if AUTH_TYPE=oauth

POSTGRES_DATABASE_URI=postgres:postgres@langchain-db:5432/postgres # Change to your database URI if using external postgres. Otherwise, leave it as is

REDIS_DATABASE_URI=redis://langchain-redis:6379 # Change to your Redis URI if using external Redis. Otherwise, leave it as is

LOG_LEVEL=warning # Change to your desired log level

MAX_ASYNC_JOBS_PER_WORKER=10 # Change to your desired maximum async jobs per worker. We recommend 10/suggest spinning up more replicas of the queue worker if you need more throughput.

You can also set these environment variables in the docker-compose.yml file directly or export them in your terminal. We recommend setting them in the .env file.
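Before starting the stack, it can help to confirm that the .env file has the required values filled in. The sketch below is an illustration only: check_env_file and env_value are hypothetical helper names, the variable names are the ones listed above, and the parsing assumes simple KEY=value lines whose values contain no "#" character.

```shell
#!/bin/sh
# Sketch: sanity-check a .env file before starting the stack.
# check_env_file and env_value are hypothetical helper names.

# Print the value of KEY ($1) from FILE ($2), dropping any trailing "# comment".
# Assumes values themselves contain no "#".
env_value() {
    sed -n "s/^$1=//p" "$2" | head -n 1 | sed 's/[[:space:]]*#.*$//'
}

check_env_file() {
    file=${1:-.env}
    if [ ! -f "$file" ]; then
        echo "missing $file" >&2
        return 1
    fi

    # The license key is required and must not be the placeholder value.
    key=$(env_value LANGSMITH_LICENSE_KEY "$file")
    if [ -z "$key" ] || [ "$key" = "your-license-key" ]; then
        echo "LANGSMITH_LICENSE_KEY is not set in $file" >&2
        return 1
    fi

    # With AUTH_TYPE=oauth, the client id, issuer URL, and API key salt are required.
    if [ "$(env_value AUTH_TYPE "$file")" = "oauth" ]; then
        for name in OAUTH_CLIENT_ID OAUTH_ISSUER_URL API_KEY_SALT; do
            if [ -z "$(env_value "$name" "$file")" ]; then
                echo "$name must be set when AUTH_TYPE=oauth" >&2
                return 1
            fi
        done
    fi
    echo "$file looks complete"
}
```

Running check_env_file with no arguments checks .env in the current directory; pass a path to check a different file.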

3. Start server

Start the LangSmith application by executing the following command in your terminal:

docker-compose up

You can also run the server in the background by running:

docker-compose up -d

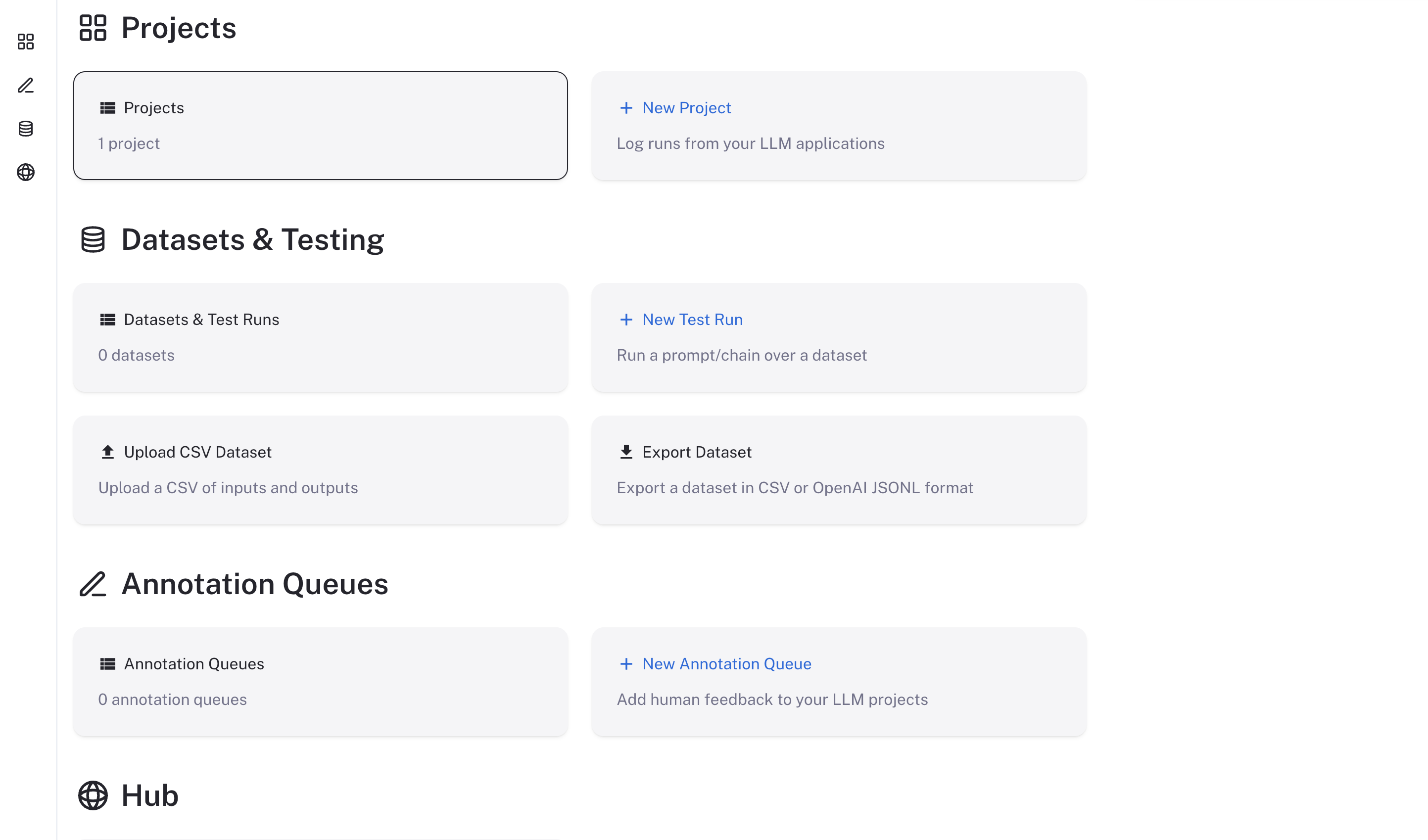

If the server starts correctly, it will open up the UI in your browser at http://localhost.

The LangSmith UI should be visible/operational and look like this:

Stopping the server

docker-compose down

Checking the logs

If, at any point, you want to check if the server is running and see the logs, run

docker-compose logs
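Besides reading the logs, a script can poll the UI address until it answers. This is a minimal sketch under assumptions: wait_for_url is a hypothetical helper, curl is assumed to be installed, and the UI is assumed to return a successful HTTP status at http://localhost once it is up.

```shell
#!/bin/sh
# Sketch: poll a URL until it responds or the attempts run out.
# wait_for_url is a hypothetical helper; http://localhost is the UI address from this guide.
wait_for_url() {
    url=${1:-http://localhost}
    attempts=${2:-30}   # how many times to try
    delay=${3:-2}       # seconds to sleep between tries
    i=1
    while [ "$i" -le "$attempts" ]; do
        # -f: fail on HTTP errors, -s: silent, -o /dev/null: discard the body.
        if curl -fs -o /dev/null "$url"; then
            echo "$url is responding"
            return 0
        fi
        sleep "$delay"
        i=$((i + 1))
    done
    echo "$url did not respond after $attempts attempts" >&2
    return 1
}
```

With the defaults, wait_for_url tries for roughly one minute, which may need adjusting depending on how long the containers take to start on your machine.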

Running via the LangSmith SDK

The following explains how to run LangSmith using the LangSmith SDK. This is a convenient wrapper around the docker-compose command.

We recommend only using this in a local setting as it is not as flexible as using docker-compose directly.

1. Install the LangSmith SDK

The Python LangSmith SDK exposes a langsmith command line tool.

First, install a recent version of the langsmith package:

- Python/Pip:

pip install -U langsmith

This will install the LangSmith Client and the bundled command line tool.

2. Start server

Start the LangSmith tracing server by executing the following command in your terminal:

langsmith start --langsmith-license-key=<YOUR_LANGSMITH_LICENSE_KEY> --version=<LANGSMITH_VERSION> --openai-api-key=<YOUR_OPENAI_API_KEY>

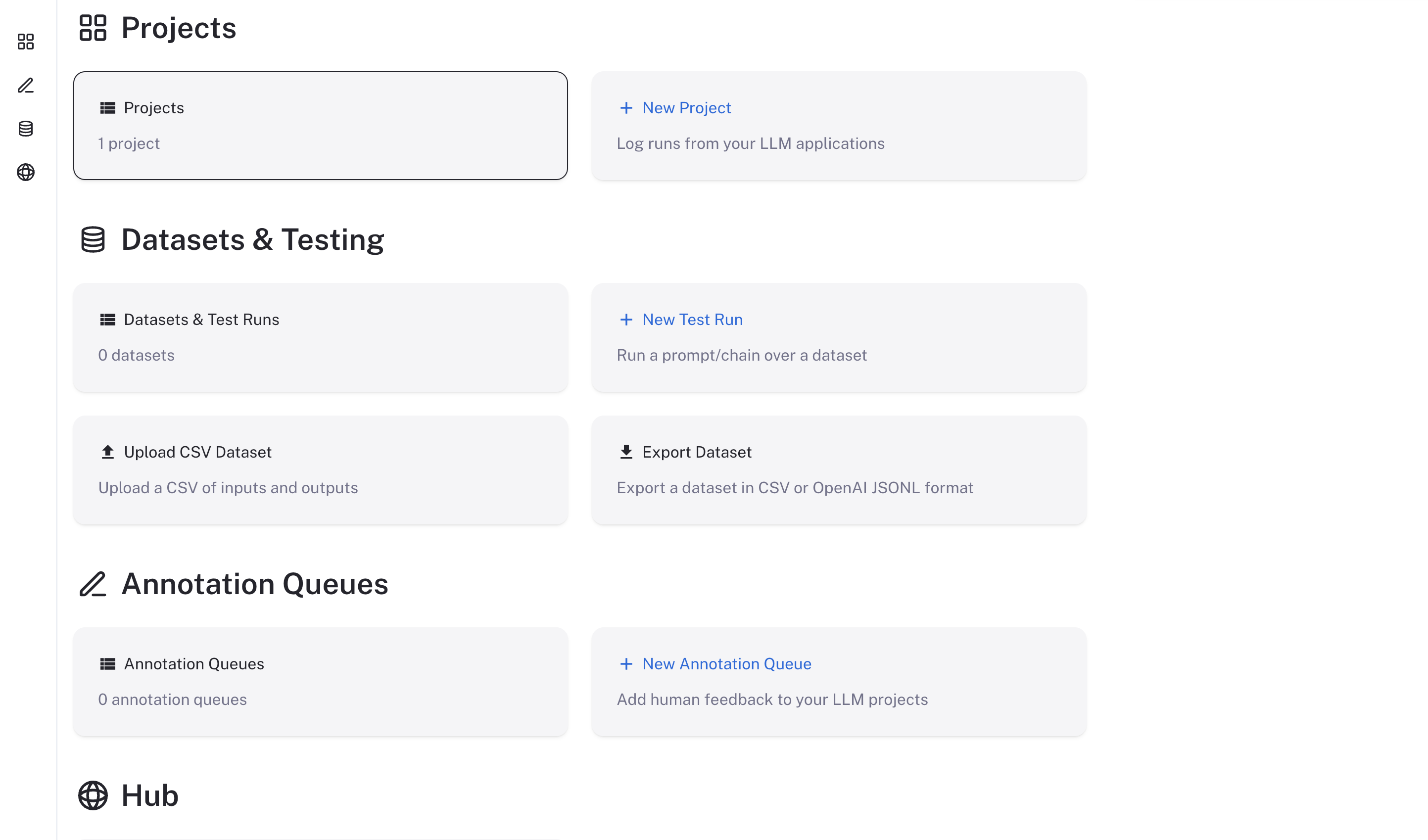

If the server starts correctly, it will open up the UI in your browser at http://localhost.

The LangSmith UI should be visible/operational and look like this:

Stopping the server

To stop the server, run the following command:

langsmith stop

Checking the logs

If, at any point, you want to check if the server is running and see the logs, run

langsmith logs

Using LangSmith

Now that LangSmith is running, you can start using it to trace your code. You can find more information on how to use self-hosted LangSmith in the Self-Hosted Usage Guide.

Frequently Asked Questions

How can we upgrade our application?

- We plan to release new minor versions of the LangSmith application every 6 weeks. This will include release notes and all changes should be backwards compatible. To upgrade, you will need to restart your LangSmith instance specifying the new version.
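For a Docker Compose deployment, specifying the new version can be done by editing the version line in the .env file. The sketch below assumes the _LANGSMITH_IMAGE_VERSION variable shown in the .env example; set_langsmith_version is a hypothetical helper, and it drops any trailing comment on that line.

```shell
#!/bin/sh
# Sketch: point .env at a new LangSmith version.
# set_langsmith_version is a hypothetical helper; _LANGSMITH_IMAGE_VERSION is the
# variable name from the .env example in this guide.
# Assumes the version string contains no "/" or "&" (e.g. 0.2.11).
set_langsmith_version() {
    version=$1
    file=${2:-.env}
    if [ -z "$version" ] || [ ! -f "$file" ]; then
        echo "usage: set_langsmith_version VERSION [FILE]" >&2
        return 1
    fi
    tmp=$(mktemp) || return 1
    # Rewrite only the version line; every other line is copied unchanged.
    # If the line is absent, the file is left as it was.
    if sed "s/^_LANGSMITH_IMAGE_VERSION=.*/_LANGSMITH_IMAGE_VERSION=$version/" "$file" > "$tmp"; then
        cat "$tmp" > "$file"
        status=$?
    else
        status=1
    fi
    rm -f "$tmp"
    return "$status"
}
```

After updating the file, running docker-compose pull followed by docker-compose up -d fetches the new images and restarts the containers with them.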

How can we back up our application?

- The docker solution uses docker volumes to store data. You can back up the data by backing up the volumes.
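One common way to back up a Docker volume is to mount it into a throwaway container and archive its contents. The following is a sketch of that general pattern, not a LangSmith-specific procedure: backup_volume is a hypothetical helper, the alpine image is an assumption, and the volume names depend on your deployment (list them with docker volume ls).

```shell
#!/bin/sh
# Sketch: archive one Docker volume into the current directory.
# backup_volume is a hypothetical helper; volume names differ per deployment.
backup_volume() {
    volume=$1
    if [ -z "$volume" ]; then
        echo "usage: backup_volume VOLUME [ARCHIVE]" >&2
        return 1
    fi
    # Default archive name: <volume>-<YYYYMMDD>.tar.gz
    out=${2:-$volume-$(date +%Y%m%d).tar.gz}
    # Mount the volume read-only in a temporary container and tar its contents
    # into the current directory, which is mounted at /backup.
    docker run --rm \
        -v "$volume":/data:ro \
        -v "$(pwd)":/backup \
        alpine tar czf "/backup/$out" -C /data .
}
```

Archiving the files of a running database can produce an inconsistent copy, so it is safer to stop the stack with docker-compose down before backing up the volumes and start it again afterwards.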

How can we authenticate to the application?

- Currently, our self-hosted solution supports OAuth as an authentication solution.

- Note: we do offer a no-auth option but highly recommend setting up OAuth before moving into production.

How can I use External Postgres or Redis?

- You can configure external Postgres or Redis using the external sections in the .env file. You will need to provide the connection URL/params for the database/Redis instance. Look at the .env.example file for more information.

What networking configuration is needed for the application?

- Our deployment only needs egress for a few things:

- Fetching images (If mirroring your images, this may not be needed)

- Talking to any LLMs

- Your VPC can set up rules to limit any other access.

- Note: The X-Tenant-Id header must be allowed to pass through to the backend service. It is used to determine which tenant the request is for.

What resources should we allocate to the application?

- We recommend at least 4 vCPUs and 16GB of memory for our application. This is a rough estimate and can vary based on the number of users and the size of the data you are working with.

Increasing the number of replicas

- If you need more throughput, you can increase the number of replicas of the queue worker by running the following command:

docker-compose up --scale langchain-queue=5

This will start 5 replicas of the queue worker. You can change the number to whatever you need. Note that these containers are fairly CPU intensive, and you should ensure you have enough resources to support the number of replicas you are starting.