Tracing Nested Calls within Tools

Tools are interfaces an agent can use to interact with any function. Often those functions include additional calls to a retriever, LLM, or other resource you'd like to trace. To ensure the nested calls are all grouped within the same trace, pass the tool's callbacks (available through the run_manager) down to those calls.

The following example shows how to pass callbacks through a custom tool to a nested chain. This ensures that the chain's trace is properly included within the agent trace rather than appearing separately.

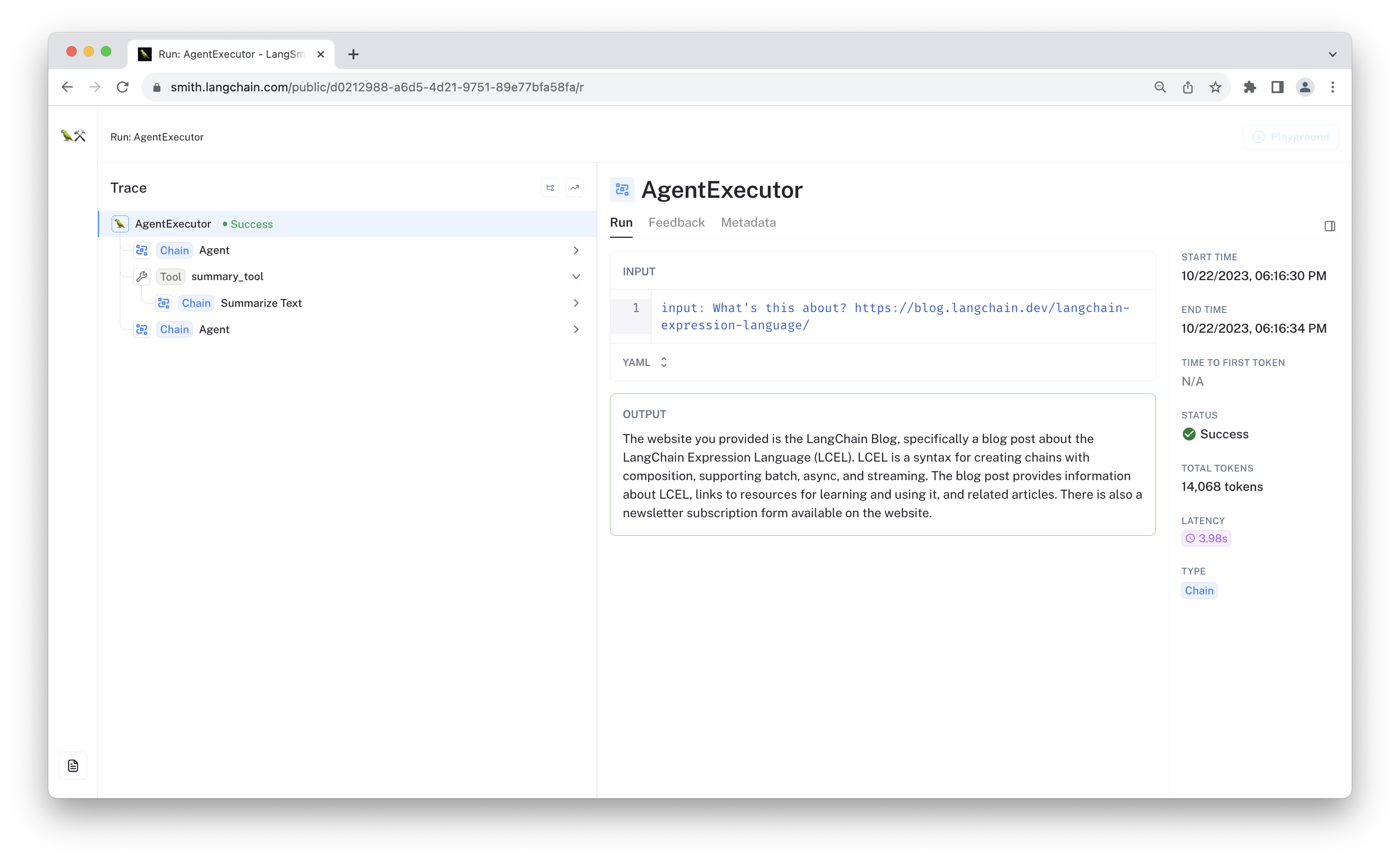

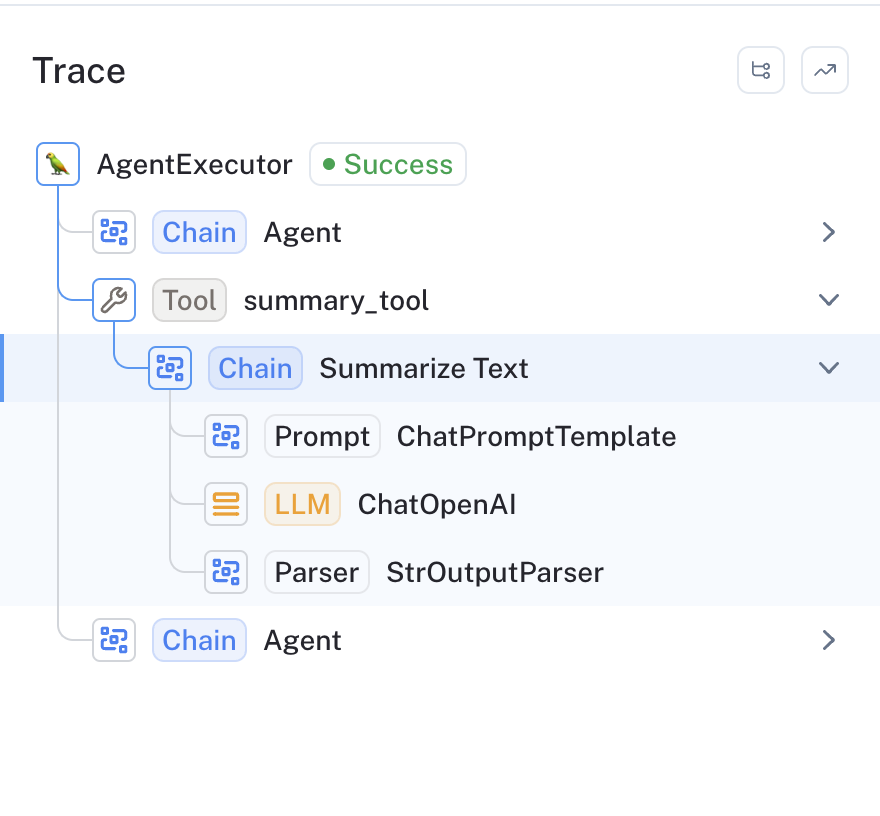

The resulting trace looks like the following:

Prerequisites

This example uses OpenAI and LangSmith. Please make sure the dependencies and API keys are configured appropriately before continuing.

%pip install -U langchain openai

import os

os.environ["LANGCHAIN_API_KEY"] = "<your api key>"
os.environ["LANGCHAIN_TRACING_V2"] = "true"
os.environ["OPENAI_API_KEY"] = "<your openai api key>"
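A missing or placeholder key usually only surfaces later as an authentication error, so a quick check at this point can help. The sketch below is purely illustrative: `REQUIRED_VARS` and `missing_env_vars` are hypothetical names, not part of LangChain or LangSmith, and the placeholder test assumes the `<...>` convention used in the cell above.

```python
import os
from typing import List, Mapping, Sequence

# Variable names taken from the setup cell above.
REQUIRED_VARS = ("LANGCHAIN_API_KEY", "LANGCHAIN_TRACING_V2", "OPENAI_API_KEY")


def missing_env_vars(
    env: Mapping[str, str], required: Sequence[str] = REQUIRED_VARS
) -> List[str]:
    """Return required names that are unset, empty, or still a '<...>' placeholder."""
    missing = []
    for name in required:
        value = env.get(name, "").strip()
        is_placeholder = value.startswith("<") and value.endswith(">")
        if not value or is_placeholder:
            missing.append(name)
    return missing


# An empty list means every required variable has a real-looking value.
print(missing_env_vars(os.environ))
```

Passing the environment in as an argument, rather than reading `os.environ` inside the function, keeps the check easy to exercise with a plain dictionary.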

1. Define tool

We will show how to properly structure your nested calls both when using the decorator and when subclassing the BaseTool class.

Option 1: Using the @tool decorator

The @tool decorator is the most concise way to define a LangChain tool. To propagate callbacks through the tool function, simply include a "callbacks" argument in the wrapped function. Below is an example.

import uuid

import requests
from langchain.callbacks.manager import Callbacks
from langchain.chat_models import ChatOpenAI
from langchain.prompts import ChatPromptTemplate
from langchain.schema.output_parser import StrOutputParser
from langchain.tools import tool


@tool
def summarize_tool(url: str, callbacks: Callbacks = None):
    """Summarize a website."""
    text = requests.get(url).text
    summary_chain = (
        ChatPromptTemplate.from_template(
            "Summarize the following text:\n<TEXT {uid}>\n" "{text}" "\n</TEXT {uid}>"
        ).partial(uid=lambda: uuid.uuid4())
        | ChatOpenAI(model="gpt-3.5-turbo-16k")
        | StrOutputParser()
    ).with_config(run_name="Summarize Text")
    # Forward the tool's callbacks so the chain run is nested under the tool run.
    return summary_chain.invoke(
        {"text": text},
        {"callbacks": callbacks},
    )

Option 2: Subclass BaseTool

If you directly subclass BaseTool, you have more control over the class behavior and state management. You can choose to propagate callbacks by accepting a "run_manager" argument in your _run method.

Below is an equivalent definition of our tool using inheritance.

import uuid
from typing import Optional

import aiohttp
import requests
from langchain.callbacks.manager import (
    AsyncCallbackManagerForToolRun,
    CallbackManagerForToolRun,
)
from langchain.chat_models import ChatOpenAI
from langchain.prompts import ChatPromptTemplate
from langchain.schema.output_parser import StrOutputParser
from langchain.schema.runnable import Runnable
from langchain.tools import BaseTool
from pydantic import Field


def _default_summary_chain():
    """An LLM chain that summarizes the input text"""
    return (
        ChatPromptTemplate.from_template(
            "Summarize the following text:\n<TEXT {uid}>\n" "{text}" "\n</TEXT {uid}>"
        ).partial(uid=lambda: uuid.uuid4())
        | ChatOpenAI(model="gpt-3.5-turbo-16k")
        | StrOutputParser()
    ).with_config(run_name="Summarize Text")


class CustomSummarizer(BaseTool):
    name = "summary_tool"
    description = "summarize a website"
    summary_chain: Runnable = Field(default_factory=_default_summary_chain)

    def _run(
        self,
        url: str,
        run_manager: Optional[CallbackManagerForToolRun] = None,
    ) -> str:
        """Use the tool."""
        text = requests.get(url).text
        # get_child() returns callbacks scoped to this tool run, so the chain nests under it.
        callbacks = run_manager.get_child() if run_manager else None
        return self.summary_chain.invoke(
            {"text": text},
            {"callbacks": callbacks},
        )

    async def _arun(
        self,
        url: str,
        run_manager: Optional[AsyncCallbackManagerForToolRun] = None,
    ) -> str:
        """Use the tool asynchronously."""
        async with aiohttp.ClientSession() as session:
            async with session.get(url) as response:
                text = await response.text()
        # Same propagation as in _run, using the async run manager.
        callbacks = run_manager.get_child() if run_manager else None
        return await self.summary_chain.ainvoke(
            {"text": text},
            {"callbacks": callbacks},
        )

2. Define agent

We will construct a simple agent using runnables and OpenAI functions, following the Agents overview in the LangChain documentation. The specifics aren't important to this example.

from langchain.prompts import ChatPromptTemplate, MessagesPlaceholder

prompt = ChatPromptTemplate.from_messages(
    [
        ("system", "You are a very powerful assistant, but bad at summarizing things."),
        ("user", "{input}"),
        MessagesPlaceholder(variable_name="agent_scratchpad"),
    ]
)

from langchain.chat_models import ChatOpenAI
from langchain.tools.render import format_tool_to_openai_function

# Configure the LLM with access to the appropriate function definitions
llm = ChatOpenAI(temperature=0)
tools = [summarize_tool, CustomSummarizer()]
llm_with_tools = llm.bind(functions=[format_tool_to_openai_function(t) for t in tools])

from langchain.agents import AgentExecutor
from langchain.agents.format_scratchpad import format_to_openai_functions
from langchain.agents.output_parsers import OpenAIFunctionsAgentOutputParser

agent = (
    {
        "input": lambda x: x["input"],
        "agent_scratchpad": lambda x: format_to_openai_functions(
            x["intermediate_steps"]
        ),
        "chat_history": lambda x: x.get("chat_history") or [],
    }
    | prompt
    | llm_with_tools
    | OpenAIFunctionsAgentOutputParser()
).with_config(run_name="Agent")

agent_executor = AgentExecutor(agent=agent, tools=tools)

3. Invoke

Now it's time to call the agent. All callbacks, including the LangSmith tracer, will be passed to the child runnables.

agent_executor.invoke(
    {
        "input": "What's this about? https://blog.langchain.dev/langchain-expression-language/"
    }
)

{'input': "What's this about? https://blog.langchain.dev/langchain-expression-language/", 'output': 'The blog post titled "LangChain Expression Language" introduces a new syntax called LangChain Expression Language (LCEL). LCEL allows users to create chains with composition and comes with a new interface that supports batch, async, and streaming. The post also mentions the creation of a "LangChain Teacher" to help users learn LCEL. Examples of how chaining can be used in language models are provided, along with the benefits of using LCEL. The post concludes by mentioning the integration of LCEL with LangSmith, another platform released by LangChain.'}

The resulting LangSmith trace should look something like the following:

Conclusion

In this example, you created a tool in two ways: using the decorator and by subclassing BaseTool. You configured the callbacks so the nested call to the "Summarize Text" chain is traced correctly.

LangSmith uses LangChain's callbacks system to trace the execution of your application. To trace nested components, the callbacks have to be passed to that component. Any time you see a trace show up on the top level when it ought to be nested, it's likely that somewhere the callbacks weren't correctly passed between components.

This is all made easy when composing functions and other calls as runnables (i.e., using the LangChain Expression Language).
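To make the parent/child relationship concrete, here is a toy model of callback propagation written with the standard library only. It is not LangChain or LangSmith code: `ToyRun`, `ToyTracer`, `ToyRunManager`, `GLOBAL_TRACER`, and the two tool functions are hypothetical names invented for illustration, and the `start` method only loosely mirrors what `run_manager.get_child()` achieves. The assumption is that a globally enabled tracer still records a nested call that receives no callbacks, but records it as a new top-level run.

```python
import uuid
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ToyRun:
    name: str
    run_id: str
    parent_id: Optional[str]  # None means the run shows up as a top-level trace


@dataclass
class ToyTracer:
    runs: List[ToyRun] = field(default_factory=list)

    def top_level(self) -> List[str]:
        """Names of runs that have no parent."""
        return [run.name for run in self.runs if run.parent_id is None]


# Stands in for a tracer that is switched on globally (e.g. through env vars).
GLOBAL_TRACER = ToyTracer()


@dataclass
class ToyRunManager:
    tracer: ToyTracer
    run_id: Optional[str] = None

    def start(self, name: str) -> "ToyRunManager":
        """Record a run parented to this manager's run; return the child's manager."""
        run = ToyRun(name, uuid.uuid4().hex, self.run_id)
        self.tracer.runs.append(run)
        return ToyRunManager(self.tracer, run.run_id)


def summarize(text: str, manager: Optional[ToyRunManager] = None) -> str:
    # With no manager handed down, the nested call starts from a fresh root.
    manager = manager or ToyRunManager(GLOBAL_TRACER)
    manager.start("Summarize Text")
    return text[:20]


def tool_dropping_callbacks(text: str, manager: ToyRunManager) -> str:
    manager.start("summarize_tool")
    return summarize(text)  # parent context is lost here


def tool_passing_callbacks(text: str, manager: ToyRunManager) -> str:
    child = manager.start("summarize_tool")
    return summarize(text, child)  # nested run is parented to the tool run


agent = ToyRunManager(GLOBAL_TRACER).start("Agent")
tool_dropping_callbacks("some page text", agent)
print(GLOBAL_TRACER.top_level())  # ['Agent', 'Summarize Text']
```

Calling `tool_passing_callbacks` instead leaves "Agent" as the only top-level run in this model, which corresponds to the nested trace the example in this guide is aiming for.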