Runnable PromptTemplates

When you build a chain using LangChain runnables, each component is assigned its own run span.

This means you can modify and run prompts directly in the playground. You can also save and version them in the hub for later use.

In this example, you will build a simple chain using runnables, save the prompt trace to the hub, and then use the versioned prompt within your chain. The prompt will look something like the following:

Using runnables in the playground makes it easy to experiment quickly with different prompts and to share them with your team, especially with those who prefer not to dive deep into the code.

Prerequisites

Before you start, make sure you have a LangSmith account and an API key (for your personal "organization"). For more information on getting started, check out the docs.

# %pip install -U langchain langchainhub --quiet

import os

# Update with your API URL if using a hosted instance of LangSmith.

os.environ["LANGCHAIN_ENDPOINT"] = "https://api.smith.langchain.com"

os.environ["LANGCHAIN_API_KEY"] = "YOUR API KEY" # Update with your API key

# Update with your Hub API URL if using a hosted instance of the LangChain Hub.

os.environ["LANGCHAIN_HUB_API_URL"] = "https://api.hub.langchain.com"

os.environ["LANGCHAIN_HUB_API_KEY"] = "YOUR API KEY" # Update with your Hub API key
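Before running the rest of the walkthrough, it can help to confirm that the variables above are actually set. The following is a minimal sketch using only the standard library. The helper name `missing_settings` is hypothetical and not part of LangChain or LangSmith, and the placeholder check assumes the literal "YOUR API KEY" string from the cell above.

```python
import os

# Variable names taken from the setup cell above.
REQUIRED_VARS = (
    "LANGCHAIN_ENDPOINT",
    "LANGCHAIN_API_KEY",
    "LANGCHAIN_HUB_API_URL",
    "LANGCHAIN_HUB_API_KEY",
)


def missing_settings(env=None, placeholder="YOUR API KEY"):
    """Return required names that are unset, empty, or still the placeholder.

    `missing_settings` is a hypothetical helper for this walkthrough only.
    """
    env = os.environ if env is None else env
    return [
        name
        for name in REQUIRED_VARS
        if env.get(name, "").strip() in ("", placeholder)
    ]


missing = missing_settings()
if missing:
    print("Still need values for:", ", ".join(missing))
```

If the list comes back empty, every variable holds some non-placeholder value. This does not verify that the keys are valid, only that they were filled in.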

1. Define your chain

First, define the chain you want to trace. We will start with a simple prompt and chat-model combination.

from langchain import prompts, chat_models, hub

prompt = prompts.ChatPromptTemplate.from_messages(
    [
        ("system", "You are a mysteriously vague oracle who only speaks in riddles."),
        ("human", "{input}"),
    ]
)

chain = prompt | chat_models.ChatAnthropic(model="claude-2", temperature=1.0)

The pipe "|" syntax above converts the prompt and chat model into a RunnableSequence for easy composition.
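To build intuition for what the pipe does, here is a toy sketch of operator-based composition in plain Python. It is not LangChain's implementation: the `Step` class, `toy_prompt`, and `toy_model` are hypothetical stand-ins, and the "model" simply echoes the last message so the example runs without any API key.

```python
class Step:
    """A toy stand-in for a runnable: wraps a function and supports `|`."""

    def __init__(self, func):
        self.func = func

    def invoke(self, value):
        return self.func(value)

    def __or__(self, other):
        # `a | b` builds a new step that feeds a's output into b.
        return Step(lambda value: other.invoke(self.invoke(value)))


# Hypothetical stand-in for the prompt: fills the {input} placeholder.
toy_prompt = Step(
    lambda inputs: [
        ("system", "You are a mysteriously vague oracle who only speaks in riddles."),
        ("human", "{input}".format(**inputs)),
    ]
)

# Hypothetical stand-in for the chat model: echoes the human message.
toy_model = Step(lambda messages: f"(riddle about: {messages[-1][1]})")

toy_chain = toy_prompt | toy_model
print(toy_chain.invoke({"input": "What is the GIL?"}))
# prints "(riddle about: What is the GIL?)"
```

The design point is that each step stays a separate object even after composition, which is what allows each component in the real chain to be traced as its own run.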

Run the chain below. We will use the collect_runs callback to retrieve the run ID of the traced run and share it.

from langchain import callbacks

with callbacks.collect_runs() as cb:
    for chunk in chain.stream({"input": "What is the GIL?"}):
        print(chunk.content, end="", flush=True)
    run_id = cb.traced_runs[0].id

The GIL is a lock that allows only one thread to execute Python bytecode at a time. Like an oracle speaking in riddles, its true nature is complex and subtle. Though it may seem to limit, the GIL protects the Python interpreter from itself, keeping all in balance. To understand it fully, one must seek with an open and curious mind.

2. Save to the hub

Open the ChatPromptTemplate child run in LangSmith and select "Open in Playground". If you have trouble finding the recent run trace, you can get its URL with the client's read_run method, as shown below.

<img src="./static/chat_prompt.png" alt="Chat Prompt Trace" style="width:75%">

Note: You could also use the hub SDK directly to push changes to the hub by using the hub.push command.

from langsmith import Client

client = Client()

# Uncomment the line below to get the URL of the traced run.

# client.read_run(run_id).url

Once the run is open in the playground, you can run the prompt with different models and edit the messages directly to see how they affect the output.

<img src="./static/playground.png" alt="Playground" style="width:75%">

Once you're satisfied with the results, click "Save as" to save the prompt to the hub. Type in the prompt name and click "Commit". You don't need to include your handle in the name; it will be prefixed automatically.

<img src="./static/save_prompt.png" alt="Save Prompt" style="width:75%">

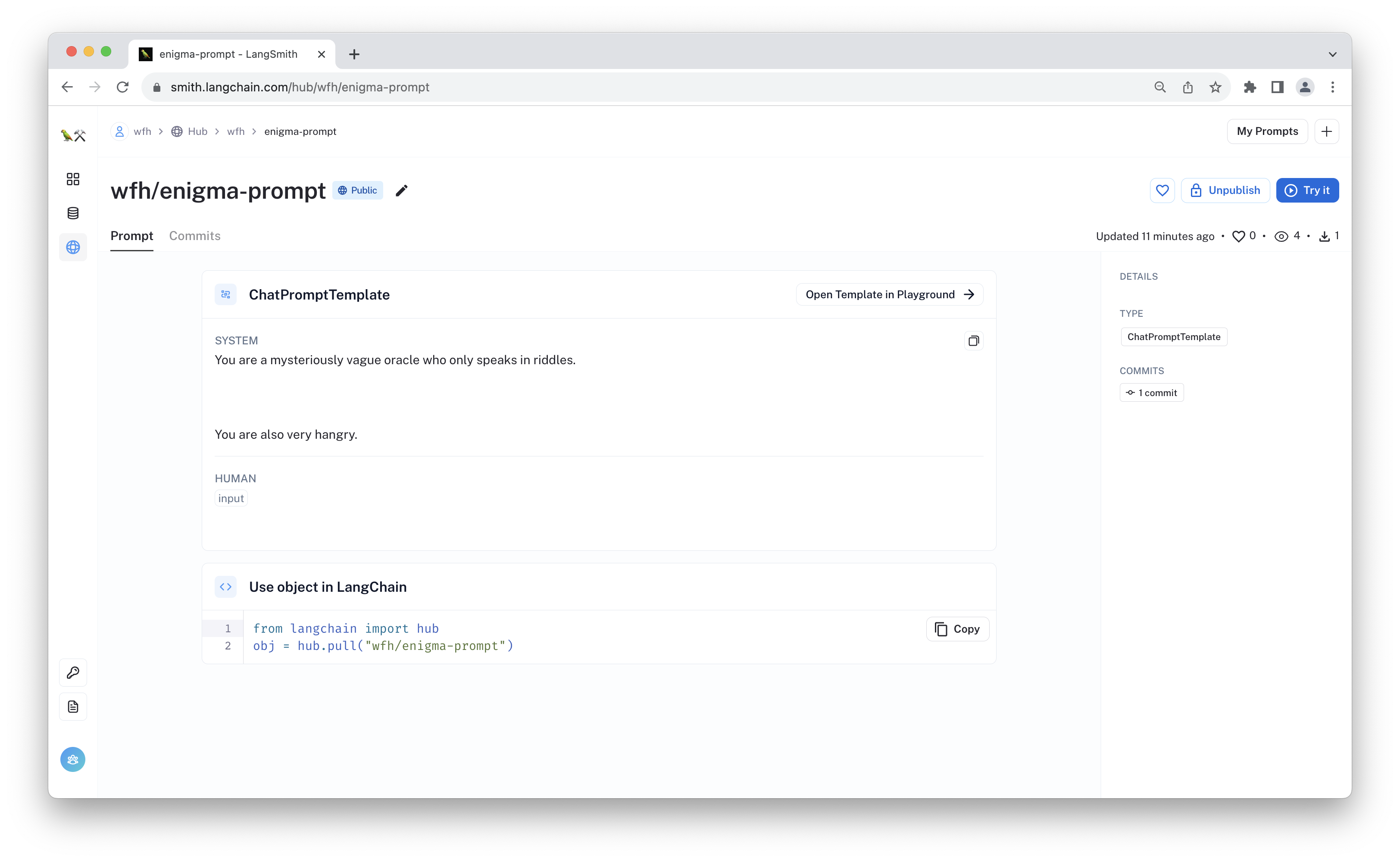

3. Load the prompt

Now you can load the prompt directly in your chain using the hub.pull() command.

from langchain import hub

prompt = hub.pull("wfh/enigma-prompt", api_url="https://api.hub.langchain.com")

chain = prompt | chat_models.ChatAnthropic(model="claude-2", temperature=1.0)

for chunk in chain.stream({"input": "What is the GIL?"}):
    print(chunk.content, end="", flush=True)

Here is a riddle about the GIL:

One mind to rule them all, one mind to find them,

One mind to bring them all, and in the darkness bind them.

This singleton stalks the lands of Python fair,

Allowing just one thread past its icy glare.

To share the power is not its intent,

So parallel paths rarely circumvent.

Alas, some clever minds found a way,

To thread beyond its reach during delay.

So the GIL lurks on in CPython's core,

A watchdog o'er shared memory's door.

But other lands now offer escape,

To break free from its single-threaded scrape.

So choose thy CPython with care my friend,

For where it leads, no code can mend.

4. Conclusion

In this walkthrough, you modified an existing prompt run within the playground and saved it to the hub. You then loaded it from the hub to use within your chain.

The playground is an easy way to quickly debug a prompt's behavior when you notice something in your traces. Saving to the LangChain Hub then lets you version and share the prompt with others.